Every day, thousands of hours of new video content sits waiting to be discovered. Most of it lives in long-form, horizontal formats, while audiences are scrolling through vertical feeds on their phones.

Glance, a mobile-first content platform, knows this challenge well. The company processes 1-2 hour videos from sources like podcasts, news reports, movies, and web series, and transforms them into 30 to 180-second vertical clips optimized for mobile lock screens. With daily volume projected to grow from 3,500 to over 10,000 videos per day, manual editing wasn’t a realistic path forward.

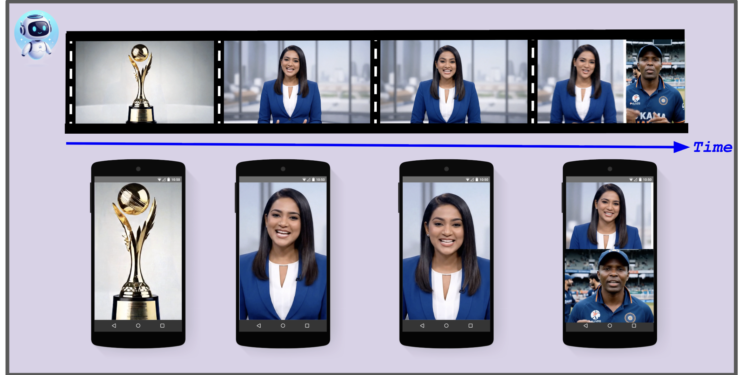

The solution also needed to go beyond simple cropping. It required the intelligence to identify and center the primary speaker, or dynamically split the screen to stack speakers vertically during conversations, preserving the context that makes content worth watching.

Here’s how Glance’s video generation solution works.

Building for the lock screen era

The goal was to create a complete pipeline that takes a long-form landscape video (16:9) and outputs multiple ready-to-publish short-form portrait videos (9:16). The solution needed to handle:

-

Key Moment Identification: Finding the most engaging 60-second segments within hours of long-form footage

-

Active Speaker Detection: Identifying who’s talking in each frame and positioning them at the top of a split screen. This includes distinguishing between a static image and a live person to ensure the crop focuses on the actual speaker.

-

Split Screen Detection: Recognizing interview layouts (common in news broadcasts) and stacking the frames vertically to preserve conversation context

-

Intelligent Reframing: Converting a multi-speaker, wide-screen shot into a focused, vertical frame without losing context

-

Dynamic Caption Highlighting: Generating word-level timestamps for “Karaoke-style” captions that increase engagement on silent-by-default mobile screens

-

Automated Branding: Applying masks, logos, and overlays programmatically to maintain brand consistency across all videos

The final technical solution uses Google Cloud Speech-to-Text v2, Gemini, and the Google Vision API, combined with custom video manipulation using Samurai (an open-source object tracking tool), OpenCV and MoviePy.

Architecture overview

The pipeline is divided into three distinct modules.