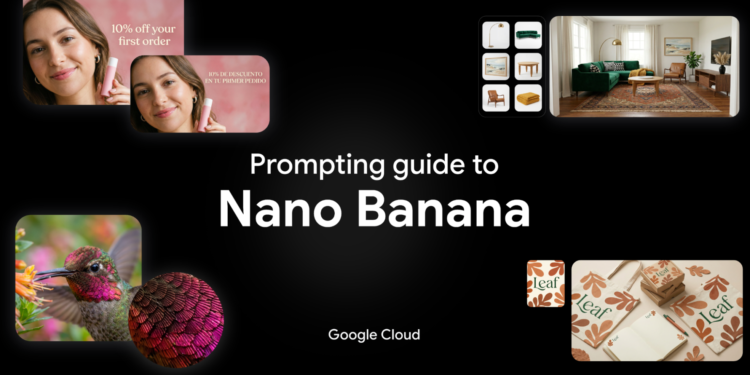

Creating precise, high-quality images often involves endless trial and error. You need a model that actually understands what you’re asking for.

Built on the Gemini 3 family of models, Nano Banana models apply deep reasoning capabilities to fully understand your prompt before generating an image. We spent weeks testing Nano Banana 2 and Nano Banana Pro against every use case we could imagine to find their limits.

We put together this guide to share exactly what we learned and how you can get the best results.

What you’ll learn in this guide:

- Model overview
- Full breakdown of tech specs
- Best practices for effective prompting
- Prompting frameworks
- How Nano Banana works with other creative models, Veo and Lyria

Model overview

Nano Banana models are advanced image generation and editing models that use real-world knowledge and deep reasoning capabilities to deliver precise, rich visual results. Most recently, we announced Nano Banana 2, which shines in three ways:

- More accurate visuals: Nano Banana 2 is powered by real-time information and images from web search. This means better educational tools, localized marketing, travel apps, and more.
- Fast, Pro-level features: We’ve unlocked premium features – from text rendering and translations, to upscaling to 2K/4K. Now, your creative teams can build cohesive narratives, storyboards, and product mockups.
- Precision control: Generate or edit images to fit any project requirement, with native support for 16:9, 9:16, 2:1, and more. Expect vibrant lighting and richer textures, whether you’re generating posters, marketing mockups, or ads.

Breakdown of tech specs for Nano Banana 2 and Nano Banana Pro

Before diving into prompting, here is a breakdown of what the models can handle via the API and Vertex AI (for the latest details, always check the official Gemini 3 Pro Image and Gemini 3.1 Flash Image documentation):

- Context windows: Gemini 3.1 Flash Image (Nano Banana 2) supports a maximum of 131,072 input tokens, while Gemini 3 Pro Image (Nano Banana Pro) supports a maximum of 65,536 input tokens. Both models support a maximum of 32,768 output tokens.
- Resolutions: Built-in generation capabilities for 1K, 2K, and 4K visuals. Gemini 3.1 Flash Image adds the smaller 512px (0.5K) resolution.
- Aspect ratios: Both models support 1:1, 3:2, 2:3, 3:4, 4:3, 4:5, 5:4, 9:16, 16:9, and 21:9. Gemini 3.1 Flash Image Preview also adds 1:4, 4:1, 1:8, and 8:1 aspect ratios.
- Image inputs: You can mix up to 14 reference object images in a single prompt. Supported MIME types include image/png, image/jpeg, image/webp, image/heic, and image/heif.
- Document inputs: You can input text and PDF files. The maximum file size per file is 50 MB for API and Cloud Storage imports, or 7 MB for direct uploads through the Google Cloud console.
- Outputs: Both models output text and images.
- Model knowledge base: Both models have a knowledge cutoff date of January 2025.
- Live data: Both models are powered by real-time information from web search.
- Trust & safety: All generated images include C2PA Content Credentials and a SynthID watermark.

For examples of the top features, check out this blog.
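If you build requests programmatically, it can help to check them against the limits above before sending anything. The following is a minimal sketch in plain Python that encodes only the numbers listed in this section. The dictionary keys (`nano-banana-2`, `nano-banana-pro`), the `ModelSpec` class, and the `check_request` helper are illustrative names we made up for this example, not official model IDs or SDK calls, and the values may drift from the official documentation.

```python
from dataclasses import dataclass

# Aspect ratios listed above as supported by both models.
SHARED_ASPECT_RATIOS = frozenset(
    {"1:1", "3:2", "2:3", "3:4", "4:3", "4:5", "5:4", "9:16", "16:9", "21:9"}
)


@dataclass(frozen=True)
class ModelSpec:
    max_input_tokens: int
    resolutions: frozenset
    aspect_ratios: frozenset


# Keys are illustrative labels (an assumption), not official model IDs.
SPECS = {
    "nano-banana-2": ModelSpec(
        max_input_tokens=131_072,
        resolutions=frozenset({"512px", "1K", "2K", "4K"}),
        aspect_ratios=SHARED_ASPECT_RATIOS | {"1:4", "4:1", "1:8", "8:1"},
    ),
    "nano-banana-pro": ModelSpec(
        max_input_tokens=65_536,
        resolutions=frozenset({"1K", "2K", "4K"}),
        aspect_ratios=SHARED_ASPECT_RATIOS,
    ),
}

MAX_REFERENCE_IMAGES = 14
SUPPORTED_IMAGE_MIME_TYPES = frozenset(
    {"image/png", "image/jpeg", "image/webp", "image/heic", "image/heif"}
)


def check_request(model, resolution, aspect_ratio, image_mime_types=()):
    """Return a list of problems; an empty list means the request fits the listed specs."""
    spec = SPECS[model]
    problems = []
    if resolution not in spec.resolutions:
        problems.append(f"{model} does not list resolution {resolution}")
    if aspect_ratio not in spec.aspect_ratios:
        problems.append(f"{model} does not list aspect ratio {aspect_ratio}")
    if len(image_mime_types) > MAX_REFERENCE_IMAGES:
        problems.append(f"more than {MAX_REFERENCE_IMAGES} reference images")
    unsupported = sorted(set(image_mime_types) - SUPPORTED_IMAGE_MIME_TYPES)
    if unsupported:
        problems.append(f"unsupported MIME types: {', '.join(unsupported)}")
    return problems


if __name__ == "__main__":
    # 512px and 8:1 are listed only for Nano Banana 2, so Pro reports two problems.
    print(check_request("nano-banana-pro", "512px", "8:1"))
```

A check like this only catches mismatches with the specs as written here; treat the official model documentation as the source of truth.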

Best practices for effective prompting

When it comes to effective prompting, there are a few ways to ensure the visual you get is the visual you asked for. Here are some guidelines:

- Be specific: Provide concrete details on subject, lighting, and composition.
- Use positive framing: Describe what you want, not what you don’t want (e.g. “empty street” instead of “no cars”).
- Control the camera: Use photographic and cinematic terms like “low angle” and “aerial view”.
- Iterate: Refine images with follow-up prompts in a conversational manner.

The key is to start a prompt with a strong verb that tells the model the primary operation you want to perform.
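Two of these guidelines, leading with a verb and using positive framing, are simple enough to check automatically. Here is a rough sketch of a prompt linter in Python. The verb list, the negative-word pattern, and the `lint_prompt` function are our own assumptions for illustration, not part of any Nano Banana tooling, and the output is a set of hints rather than hard rules. A narrative text-to-image prompt may reasonably open with its subject.

```python
import re

# Hypothetical starter list of operation verbs; extend it for your own workflow.
OPERATION_VERBS = {
    "generate", "create", "edit", "replace", "remove",
    "add", "render", "combine", "translate",
}

# Words that often signal negative framing ("no cars" instead of "empty street").
NEGATIVE_PATTERN = re.compile(r"\b(no|not|without|never|don[’']t)\b", re.IGNORECASE)


def lint_prompt(prompt):
    """Return a list of hint strings; an empty list means neither heuristic fired."""
    warnings = []
    words = prompt.strip().split()
    first_word = words[0].lower().strip(".,:;!") if words else ""
    if first_word not in OPERATION_VERBS:
        warnings.append("Prompt does not start with a known operation verb.")
    if NEGATIVE_PATTERN.search(prompt):
        warnings.append("Prompt uses negative framing; describe what you want instead.")
    return warnings


if __name__ == "__main__":
    for hint in lint_prompt("A street with no cars"):
        print(hint)
```

A keyword match like this will misfire on phrases such as “no-frills packaging”, so use it to prompt a second look, not to reject prompts outright.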

Five prompting frameworks

1. Image generation

When generating an image, your prompt structure depends entirely on whether you are using reference images or relying solely on text.

Text-to-image generation without references

When starting with a blank canvas, you are the director. A simple list of keywords won’t cut it; you need to describe the scene narratively.

Formula: [Subject] + [Action] + [Location/context] + [Composition] + [Style]

Example prompt: [Subject] A striking fashion model wearing a tailored brown dress, sleek boots, and holding a structured handbag. [Action] Posing with a confident, statuesque stance, slightly turned. [Location/context] A seamless, deep cherry red studio backdrop. [Composition] Medium-full shot, center-framed. [Style] Fashion magazine style editorial, shot on medium-format analog film, pronounced grain, high saturation, cinematic lighting effect.
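If you generate many prompts, you can keep the formula consistent by assembling each one from named parts. The sketch below is one possible way to do that in Python. The `ScenePrompt` class and its `render` method are hypothetical helpers written for this guide, not part of any SDK, and the result is just a string you would pass to whichever client you use.

```python
from dataclasses import dataclass


@dataclass
class ScenePrompt:
    """One field per slot in the formula: subject, action, location, composition, style."""
    subject: str
    action: str
    location: str
    composition: str
    style: str

    def render(self) -> str:
        # Refuse to build a prompt with an empty slot, since every part of the formula matters.
        missing = [name for name, value in vars(self).items() if not value.strip()]
        if missing:
            raise ValueError(f"missing prompt parts: {', '.join(missing)}")
        parts = [self.subject, self.action, self.location, self.composition, self.style]
        # Normalize each part to end with exactly one period, then join into one narrative.
        return " ".join(part.strip().rstrip(".") + "." for part in parts)


if __name__ == "__main__":
    prompt = ScenePrompt(
        subject="A striking fashion model wearing a tailored brown dress, sleek boots, "
                "and holding a structured handbag",
        action="Posing with a confident, statuesque stance, slightly turned",
        location="A seamless, deep cherry red studio backdrop",
        composition="Medium-full shot, center-framed",
        style="Fashion magazine style editorial, shot on medium-format analog film, "
              "pronounced grain, high saturation, cinematic lighting effect",
    )
    print(prompt.render())
```

Keeping the slots separate also makes iteration easier: you can swap only the composition or style and regenerate without retyping the rest of the scene.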